I am publishing all my work at https://jontromanab.github.com. If you cannot find the code for any of my projects, please contact me; I am probably still working on it, and I will be happy to upload the code as soon as possible.

Here is my dissertation proposal file:

| File | Size |
| --- | --- |
| dissertation.pdf | 4576 kb |

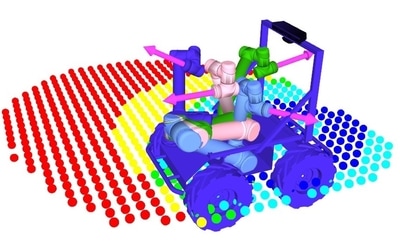

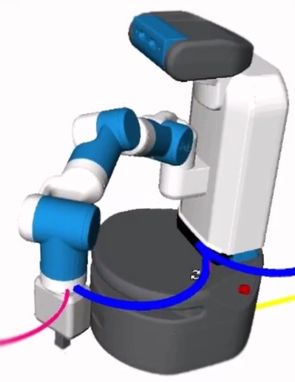

Large Surface Cleaning

For cleaning large surfaces, a novel controller was created that controls the robot arm, torso, and base together. The controller is implemented in OpenRAVE. To control a ROS-controlled robot from OpenRAVE, a library called FetchPy was created, which bridges OpenRAVE and ROS directly. Motion generation for the cleaning pattern is handled by a package called FetchWBP.

https://github.com/jontromanab/fetchpy

https://github.com/jontromanab/fetchwbp
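
The cleaning pattern itself is not described here. Purely as an illustration, the sketch below generates a back-and-forth (boustrophedon) sweep of waypoints over a rectangular surface, which is one common choice for coverage tasks. The function name `sweep_waypoints`, its parameters, and the choice of pattern are assumptions made for this example; they are not taken from FetchWBP.

```python
def sweep_waypoints(width, height, tool_width, step):
    """Return (x, y) waypoints covering a width x height rectangle.

    Hypothetical helper: rows are spaced by tool_width so that neighbouring
    passes touch, and every other row is reversed (boustrophedon sweep).
    """
    if min(width, height, tool_width, step) <= 0:
        raise ValueError("all dimensions must be positive")

    # Row centre lines, starting half a tool width in from the edge.
    rows = []
    y = tool_width / 2.0
    while y < height:
        rows.append(y)
        y += tool_width

    # Sample points along one row, always including the far end.
    n_steps = int(width // step)
    xs = [i * step for i in range(n_steps + 1)]
    if xs[-1] < width:
        xs.append(width)

    waypoints = []
    for i, row_y in enumerate(rows):
        # Reverse direction on odd rows so the tool never jumps back.
        row_xs = xs if i % 2 == 0 else list(reversed(xs))
        waypoints.extend((x, row_y) for x in row_xs)
    return waypoints
```

In a real system each waypoint would still have to be turned into arm, torso, and base motions by the controller; this sketch only covers the geometric pattern.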

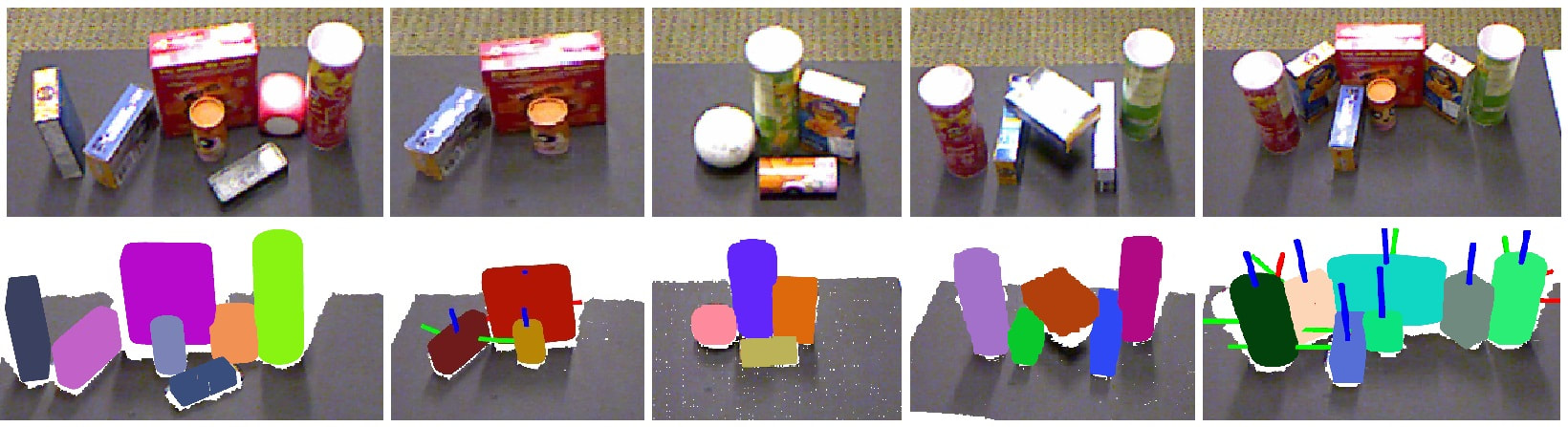

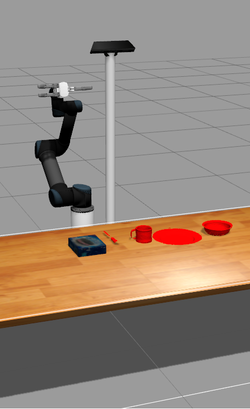

Superquadrics Grasping

My main research focuses on robotic grasping using superquadric representations. After perceiving a set of unknown objects, we filter the objects on the table, segment the individual objects, and mirror them to obtain a full shape estimate. We then fit superquadric models to the completed point clouds. A novel grasping algorithm has been implemented to obtain antipodal grasp points directly on the superquadrics.

More about the project can be found at: https://github.com/jontromanab/sq_grasp
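
For readers unfamiliar with superquadrics, the sketch below evaluates the standard inside-outside function of a superquadric in its own frame, which is the quantity that fitting methods typically drive towards 1 for points on the surface. The function and parameter names are chosen for this example, and the actual implementation in sq_grasp may differ.

```python
def inside_outside(point, scale, shape):
    """Superquadric inside-outside function F for a point in the model frame.

    scale = (a1, a2, a3) are the semi-axis lengths and shape = (e1, e2) the
    shape exponents:
        F = (|x/a1|^(2/e2) + |y/a2|^(2/e2))^(e2/e1) + |z/a3|^(2/e1)
    F < 1 means inside, F == 1 on the surface, F > 1 outside.
    """
    x, y, z = point
    a1, a2, a3 = scale
    e1, e2 = shape
    # Absolute values keep the fractional powers real for negative coordinates.
    xy_term = (abs(x / a1) ** (2.0 / e2) + abs(y / a2) ** (2.0 / e2)) ** (e2 / e1)
    return xy_term + abs(z / a3) ** (2.0 / e1)
```

With `shape=(1.0, 1.0)` the model is an ellipsoid; smaller exponents make it more box-like.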

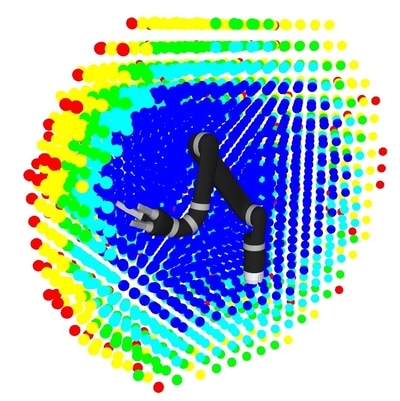

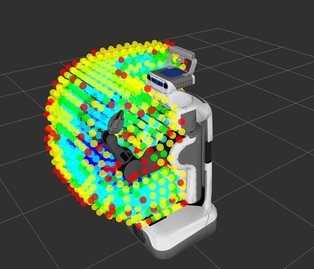

Reuleaux

In the summer of 2016, I worked with the Open Source Robotics Foundation (OSRF) in Google Summer of Code on a project called Reuleaux, which creates a reachability map of any given robot manipulator directly from its URDF. The reachability maps are then used to find optimal base placement poses for a given task.
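
To give a feel for what a reachability map stores, here is a toy sketch for a planar arm with three revolute joints. It scores each grid cell by the fraction of sampled tool orientations for which an inverse kinematics solution exists. The link lengths, the absence of joint limits, the grid layout, and the scoring metric are all assumptions for illustration; Reuleaux itself works in 3D from a URDF and this is not its code.

```python
import math


def reachability_index(x, y, links=(1.0, 1.0, 0.5), n_orientations=36):
    """Fraction of sampled tool orientations reachable at (x, y).

    Toy planar arm with three revolute joints and no joint limits. For each
    tool angle phi the wrist must sit one last-link length behind the target;
    the first two links can reach the wrist if and only if its distance from
    the base lies in [|l1 - l2|, l1 + l2].
    """
    l1, l2, l3 = links
    reachable = 0
    for k in range(n_orientations):
        phi = 2.0 * math.pi * k / n_orientations
        wrist_x = x - l3 * math.cos(phi)
        wrist_y = y - l3 * math.sin(phi)
        d = math.hypot(wrist_x, wrist_y)
        if abs(l1 - l2) <= d <= l1 + l2:
            reachable += 1
    return reachable / n_orientations


def reachability_map(extent=3.0, resolution=0.5):
    """Map each grid point (x, y) within +/- extent to its reachability index."""
    n = int(round(extent / resolution))
    cells = {}
    for i in range(-n, n + 1):
        for j in range(-n, n + 1):
            x, y = i * resolution, j * resolution
            cells[(x, y)] = reachability_index(x, y)
    return cells
```

A base placement search can then look for robot base poses that put the task poses into cells with a high index.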

More information and instructions for setting up the package can be found at:

http://wiki.ros.org/reuleaux

The main repository of Reuleaux: https://github.com/ros-industrial-consortium/reuleaux

There is also a different version of Reuleaux that uses MoveIt to construct the reachability map while considering self-collisions and joint limits: https://github.com/jontromanab/reuleaux_moveit

Arxiv Version:

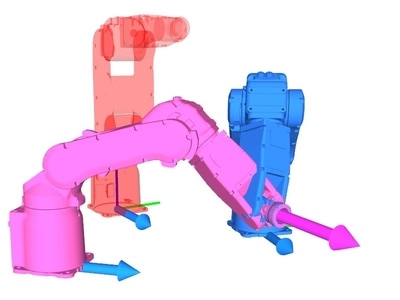

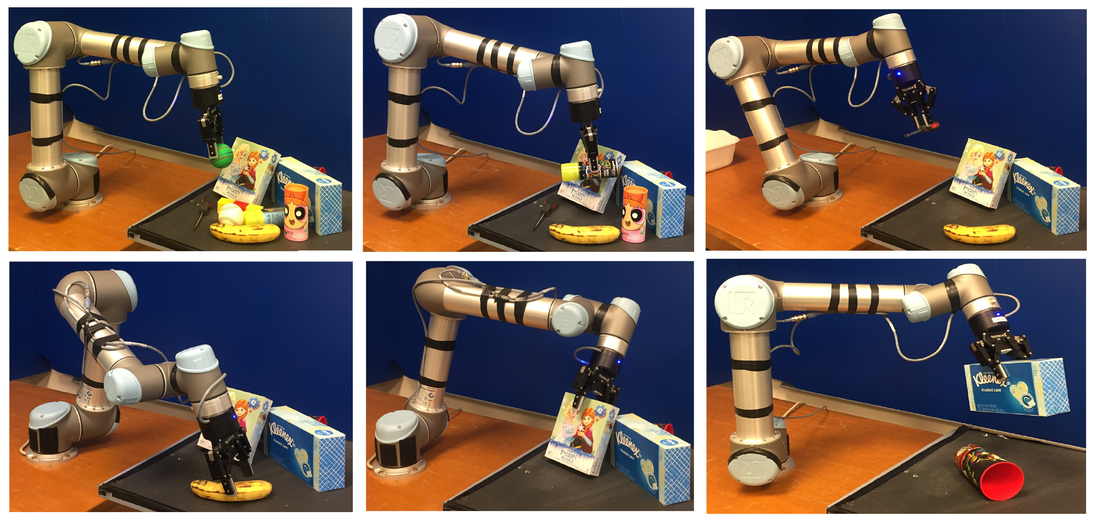

Comparing Task-Specific Hands

Currently, I am primarily focused on my PhD thesis, entitled "Comparison and Evaluation of Common Hands with Self-designed Task-Specific Robotic Hands in context of Grasp Learning". In this research, we are developing a robotic module consisting primarily of a UR5 robotic arm, a Kinect, and a Barrett Hand.

In the design process, we are developing new task-specific robotic hands by capturing human motions, analyzing those motions for key points using dual quaternions, designing articulated systems with multiple end-effectors based on the extracted key points, and 3D printing the hands. In the later stage, we are going to compare those hands with commercially available robotic hands such as the Barrett or Robotiq hands.

The comparison is based on tasks such as pick-and-place, which includes several sub-tasks: detecting and recognizing objects with ORK or OUR-CVFH, and calculating grasp points on those objects with GraspIt! or with probabilistic learning techniques, deep learning methods, or convolutional neural networks. The grasp points will be evaluated on the pick-and-place task using constraint-aware and collision-aware motions generated by MoveIt.

Creating a Dataset

We are in the process of creating a large database of daily-life objects. Most of the object models are generated with ORK capture or the STRANDS database creator by rotating a lazy-Susan table, capturing depth and RGB images, segmenting them, and registering them. The ground-truth pose information is also stored. To extend the database, we have borrowed models from several popular open repositories: Amazon (32), CMU IKEA (124), Pacman (26), TUM (Technical University Munich) (54), TUW (Technical University Wien) (33), Università Ca' Foscari Venezia (86), Willow Garage dataset (46), Berkeley dataset (132), KIT (55), PCL dataset (45), Washington dataset (86), and the YCB (Yale-CMU-Berkeley) dataset.

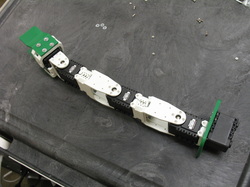

Barrett Hand Controller

I developed a controller for the Barrett Hand in Gazebo that receives enumerated commands exactly like the real hardware and performs motions based on sensor_msgs/JointState messages in Gazebo. A second controller has also been implemented that receives joint_trajectory_msgs and converts them to sensor_msgs/JointState, which is highly useful for running the hand with MoveIt.
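
The conversion done by the second controller can be pictured roughly as follows. This is a minimal sketch that uses plain dataclasses as stand-ins for the ROS messages so that it runs without a ROS installation. The class layouts, the flat `stamp` field, and the joint names in the usage example are simplifying assumptions, not the real message definitions or the controller's actual code.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrajectoryPoint:
    """Stand-in for one trajectory point: target positions and a time offset."""
    positions: List[float]
    time_from_start: float  # seconds


@dataclass
class JointTrajectory:
    """Stand-in for a joint trajectory message (not the real ROS type)."""
    joint_names: List[str]
    points: List[TrajectoryPoint] = field(default_factory=list)


@dataclass
class JointState:
    """Stand-in mirroring the name/position fields of sensor_msgs/JointState."""
    name: List[str]
    position: List[float]
    stamp: float  # seconds since the trajectory started (simplified header)


def trajectory_to_joint_states(trajectory):
    """Convert each trajectory point into one JointState-like command."""
    states = []
    for point in trajectory.points:
        if len(point.positions) != len(trajectory.joint_names):
            raise ValueError("positions and joint_names differ in length")
        states.append(JointState(name=list(trajectory.joint_names),
                                 position=list(point.positions),
                                 stamp=point.time_from_start))
    return states
```

For example, a two-point trajectory for two hypothetical joints `finger_1` and `finger_2` yields two JointState-like commands, which a controller could publish one after another at the given time offsets.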

ATOM

In the Robotics and Artificial Intelligence lab at the Indian Institute of Information Technology, Allahabad, I developed ATOM (Autonomous Testbed for Mobile Manipulation). In its final stages, it was capable of navigating in the lab and picking and placing small objects. The whole system was based on the ROS architecture.

| File | Size |
| --- | --- |
| ijais12-450765.pdf | 1057 kb |

TurtleBot

My TurtleBot is heavily influenced by the 'TurtleBot' module created by Willow Garage. Its main components are the iRobot Roomba and the Microsoft Kinect. The plates and standoffs were built from local resources.

It is driven by the 'turtlebot' stack from Willow Garage using ROS. Currently, it is capable of autonomous navigation and localization in the Robita lab using a 2D grid.

Path Planning by Maze Routing

A comprehensive technique for planning the path of a mobile robot with nonholonomic constraints using maze routing has been presented. Our robot uses a stereo-vision-based approach to detect obstacles by creating dense 3D point clouds from the stereo images. ROS packages have been implemented on the robot for the following tasks: i) providing linear and angular velocity commands, ii) calibrating and rectifying the stereo images to generate point clouds, iii) simulating the URDF (Unified Robot Description Format) model in real time alongside the real robot, and iv) visualizing the sensor data. For efficient path planning, a hybrid technique using Lee’s algorithm, modified by Hadlock’s and Soukup’s algorithms, has been implemented. Path planning results are shown for the different maze routing algorithms. Preliminary results show that Lee’s algorithm is more time-consuming than the other algorithms. A hybrid of Lee’s and Soukup’s algorithms is more efficient but unpredictable with respect to finding the minimal path. A hybrid of Lee’s and Hadlock’s algorithms is the most efficient and least time-consuming.
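
As background for the comparison above, the sketch below shows plain Lee routing on an occupancy grid: a breadth-first wave expansion from the start, followed by a backtrace from the goal. It is a minimal illustration only. The grid encoding and the function name `lee_route` are assumptions, and it includes neither the Hadlock or Soukup modifications nor the robot's nonholonomic constraints.

```python
from collections import deque


def lee_route(grid, start, goal):
    """Shortest 4-connected path on a grid via Lee's wave expansion.

    grid is a list of rows; 0 marks a free cell and 1 an obstacle.
    start and goal are (row, col) tuples. Returns the path as a list of
    cells from start to goal, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    dist = {start: 0}
    queue = deque([start])

    # Wave expansion: label reachable cells with their distance from start.
    while queue:
        cell = queue.popleft()
        if cell == goal:
            break
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if (0 <= nr < rows and 0 <= nc < cols
                    and grid[nr][nc] == 0 and nxt not in dist):
                dist[nxt] = dist[cell] + 1
                queue.append(nxt)

    if goal not in dist:
        return None

    # Backtrace: step from the goal to any neighbour labelled one less.
    path = [goal]
    while path[-1] != start:
        r, c = path[-1]
        for prev in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if dist.get(prev) == dist[path[-1]] - 1:
                path.append(prev)
                break
    path.reverse()
    return path
```

Because the wave visits cells in order of distance, the returned path is minimal in the number of grid steps, but the expansion may label a large part of the grid before it reaches the goal.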

| File | Size |
| --- | --- |
| path_planning_through_maze_routing_for_a_mobile_robot_with_nonholonomic_constraints.pdf | 776 kb |

TurtleBot ARM

The 4-DOF arm on the TurtleBot was built from the AX-12 motors of the Bioloid kit. The AX-12 motors are interfaced through the dynamixel_motor stack of ROS. The arm was later turned into a drawing robot that can draw letters on paper from Cartesian poses using MoveIt.

BIOLOID

Using the Microsoft Kinect and the Bioloid, a module has been created in which the Kinect captures the skeleton of a person and drives the Bioloid robot to imitate the user's motion.

JONTRO: Outdoor Mapping

Jontro was created by me during my M.Tech. It is based on a behavior-based architecture. At its primary level, it navigates its environment through teleoperation: the user stays in a safe place and wirelessly controls the robot, which is situated in the robotic environment. In its later stages, it can capture images of the environment and categorize them in a database. The captured images are later used as the training dataset for recognizing the environment. The categorized images create a map of the environment by forming nodes in that map. By recognizing images of the environment, the robot can determine its present location, or node, in the environment.

| File | Size |
| --- | --- |
| development_of_a_mobile_robot_for_image_acquisition_through_teleoperationt.pdf | 268 kb |